What is LLM Red Teaming?

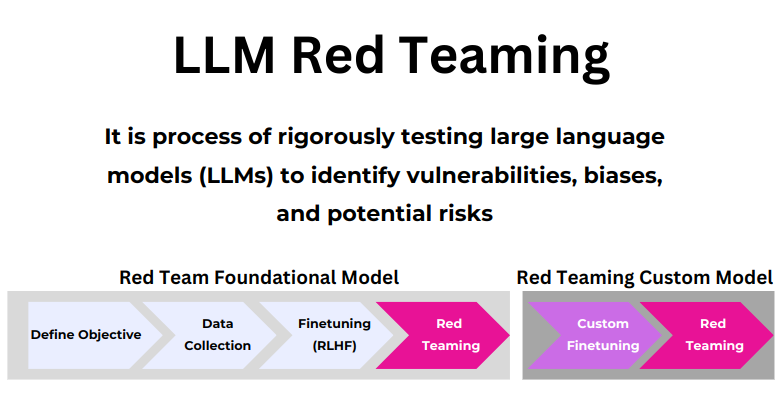

LLM red teaming involves simulating attacks on language models to identify vulnerabilities and improve their defenses. According to a comprehensive overview by the VP of Product at IBM, LLM red teaming is a proactive approach where experts attempt to exploit weaknesses in generative AI models to enhance their safety and robustness. This process is essential for preemptively addressing potential threats and ensuring the reliability of AI systems before they are deployed in real-world scenarios.

Methods of LLM Red Teaming

Domain-Specific Expert Red Teaming: Involves specialists in specific fields testing models to uncover domain-related vulnerabilities.

Frontier Threats Red Teaming: Focuses on identifying and mitigating emerging and advanced threats that could impact AI systems in the future.

Multilingual and Multicultural Red Teaming: Ensures that models perform accurately and safely across different languages and cultural contexts.

Using Language Models for Red Teaming: Employs other AI models to simulate attacks, leveraging the capabilities of AI to test its own vulnerabilities.

Automated Red Teaming: Utilizes automated systems to continuously test and identify weaknesses in AI models, ensuring ongoing robustness.

Multimodal Red Teaming: Involves testing models that process multiple types of data (text, images, audio) to ensure comprehensive security.

Open-Ended, General Red Teaming: Engages in broad and unrestricted testing to uncover a wide range of potential issues.

Challenges in LLM Red Teaming

The process of red teaming LLMs is fraught with challenges. As highlighted by Anthropic, one of the primary difficulties is the dynamic nature of AI threats. As AI evolves, so do the techniques used to exploit it, necessitating constant updates and innovations in red teaming strategies. Additionally, the complexity and opacity of large language models can make it difficult to predict and identify all possible vulnerabilities.

AI-Driven Automated Red Teaming

Innovative companies like Detoxio AI are pioneering automated red teaming platforms that leverage AI to streamline and enhance the red teaming process. Detoxio AI's platform provides an API-first approach, allowing for seamless integration and continuous automated testing of LLMs. This not only increases efficiency but also ensures that models are regularly tested against the latest threats.